Every internal auditor who has pasted a paragraph into ChatGPT to clean up a finding has, in that moment, made a data governance decision. Most don’t realise it. The speed and utility of large language models are extraordinary, but our profession’s obligations around confidentiality haven’t changed. Here are some of the risks you are exposed to when using AI tools, and how to mitigate them.

What You Feed the Model

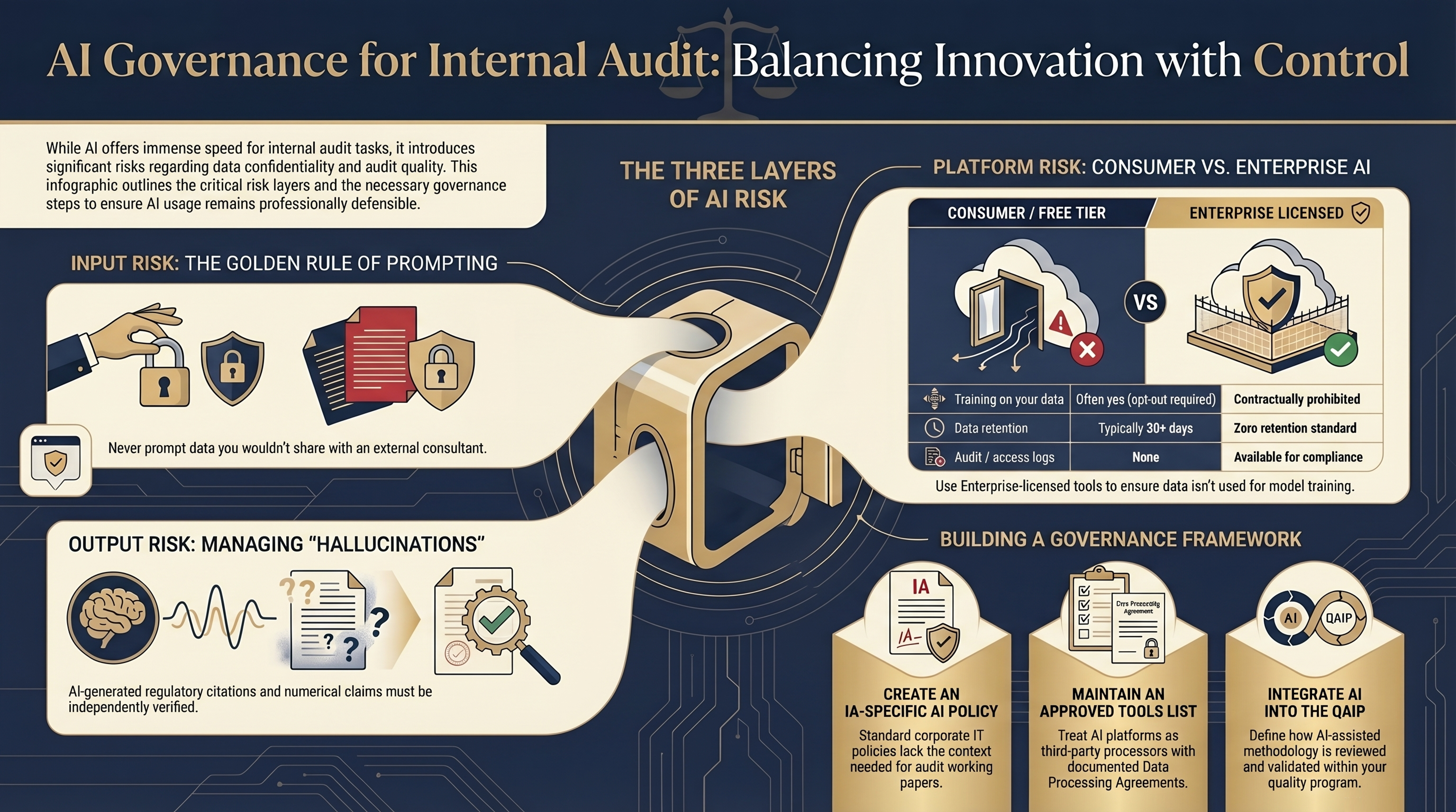

The most fundamental risk in using any LLM is the simplest one: you have to give it something to work with. Every prompt is a data transfer. The question is: what data is in that transfer?

A USEFUL RULE OF THUMB:

If you wouldn’t forward the content to a capable external consultant at a non-affiliated firm, it shouldn’t travel to a consumer AI tool in your prompt.

The practical failure mode isn’t the auditor who sits down and thinks “should I do this?” It’s the auditor under time pressure who copies a cell range from a trial balance into a chat window to ask for a formula. That reflex, repeated across a team, constitutes a systematic data exposure. If one auditor can reach for a consumer LLM this easily, imagine how much data your organisation’s entire workforce could expose to such models. The solution isn’t vigilance alone; it’s policy, classification and appropriate firewalls.

Data that should stay out of a consumer AI prompt: Raw transaction data · Trial balances with real account names · Payroll extracts · Named personnel in disciplinary or investigation contexts · Deal-sensitive contract values · Anything subject to legal privilege · PII in any form.
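To make the idea of classification before prompting concrete, here is a minimal sketch of a pre-prompt screen. It is an illustration only: the pattern names, the regular expressions and the `screen_prompt` function are all hypothetical, and simple pattern matching will miss most of the categories above (account names, privileged content, named individuals). A real control would sit in a proper DLP tool driven by your own classification rules.

```python
import re

# Hypothetical patterns for illustration only. A real deployment would rely on
# the organisation's data classification rules and a dedicated DLP product.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "payment card number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "large currency amount": re.compile(
        r"(?:USD|EUR|GBP|INR|\$|€|£)\s?\d{1,3}(?:,\d{3})+"
    ),
}


def screen_prompt(text: str) -> list[str]:
    """Return the label of every sensitive pattern found in the prompt text."""
    return [
        label
        for label, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    ]


if __name__ == "__main__":
    draft = "Payroll query from jane.doe@example.com about USD 1,250,000"
    hits = screen_prompt(draft)
    if hits:
        print("Hold this prompt. Possible sensitive content:", ", ".join(hits))
    else:
        print("No known patterns found (this is not proof the prompt is safe).")
```

An empty result only means none of the listed patterns matched. It does not replace the rule of thumb above.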

Which Tool You Use

Not all AI tools carry the same risk profile. The divide between consumer-tier access (Plus, Pro, etc.) and enterprise-licensed deployments is not cosmetic: it is structural, contractual, and legally material.

| Risk Aspect | Consumer / Free Tier | Enterprise Licensed |
|---|---|---|
| Training on your data | Often yes (opt-out required) | Contractually prohibited |
| Data retention | 30 days+ typical | Zero retention standard |
| Audit / access logs | None | Available |
| Data residency control | None | Region-selectable |
| Data Loss Prevention | No | Possible |
| Breach notification | Terms-dependent | Contractually specified |

For organisations that have invested in enterprise AI licensing, such as Azure OpenAI Service, Anthropic for Business, AWS Bedrock, or equivalent, there are still specific controls worth verifying rather than assuming:

Look for clarity in the DPA. “We don’t train on customer data” as a marketing statement is not the same as a contractual commitment with audit rights attached. It must be in writing in the Data Processing Agreement, with a clear definition of what “customer data” covers — including prompts and conversation logs.

Verify zero-retention claims at the architectural level. Ask whether conversation logs are stored anywhere in the processing chain — including intermediate buffers, logging pipelines, or safety classifiers. The final model may not train on your data while an intermediate system retains it for 7 days for abuse detection. Know which one applies.

Validate against data residency requirements. Most jurisdictions have data protection frameworks with cross-border transfer restrictions. An enterprise tool that processes data on an overseas server may create a compliance gap. Map your tool’s processing geography against your data policy.

Audit logging is a control, not a feature. If your enterprise tool offers interaction logging, it must be enabled. The ability to answer “what data did our team send to the AI tool in March?” is both a governance capability and an investigation tool if something goes wrong.
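As a rough sketch of what answering that March question could look like once logging is enabled: the example below filters an exported interaction log by calendar month and counts prompts by data classification. The record layout (`timestamp`, `user`, `data_class`), the sample entries and the `prompts_in_month` helper are all assumptions made up for illustration. Every enterprise tool has its own log schema and export mechanism.

```python
from collections import Counter
from datetime import datetime

# Hypothetical export format with made-up sample entries. Real enterprise
# tools each expose their own log schema; adapt the field names accordingly.
sample_log = [
    {"timestamp": "2025-03-04T09:15:00", "user": "auditor_a", "data_class": "internal"},
    {"timestamp": "2025-03-18T14:02:00", "user": "auditor_b", "data_class": "confidential"},
    {"timestamp": "2025-04-01T08:30:00", "user": "auditor_a", "data_class": "public"},
]


def prompts_in_month(log, year, month):
    """Return the log entries whose timestamp falls in the given calendar month."""
    selected = []
    for entry in log:
        sent_at = datetime.fromisoformat(entry["timestamp"])
        if sent_at.year == year and sent_at.month == month:
            selected.append(entry)
    return selected


# Everything the team sent in March 2025, summarised by data classification.
march = prompts_in_month(sample_log, 2025, 3)
by_class = Counter(entry["data_class"] for entry in march)

if __name__ == "__main__":
    for data_class, count in by_class.items():
        print(f"{data_class}: {count} prompt(s)")
```

If logging was never switched on, there is nothing to filter, which is the point of treating it as a control.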

How You Handle the Outputs

This is the most underappreciated risk layer. Auditors focus on what they put in; they often give much less thought to what comes back out.

I recently came across four different articles about lawyers being hit with hefty penalties for citing fictitious case law generated by AI. They simply didn’t think to cross-check it.

And we are all guilty of sometimes not really checking what comes out the other side of our prompt. In the AI world, it is not just garbage in, garbage out. Even your perfect prompts can sometimes produce unexpected results!

Hallucination is not just a technical curiosity. It is an audit quality risk. An LLM confidently fabricating a regulatory citation that ends up in a working paper — and subsequently in a report — is a professional standards failure, not a software glitch. Every substantive claim must be verified before it enters your engagement file.

Practical output controls:

- Clearly label AI-assisted content in the working paper so reviewers know what to verify independently

- Treat outputs derived from real client data under the same confidentiality classification as the source data

- Verify regulatory references, standards citations, and numerical claims in all AI-assisted drafts before sign-off

- Apply the same version control and access restriction to AI outputs as to manually prepared working papers

- Define retention and disposal rules for AI outputs that mirror your general working paper policy

The Governance Layer

Individual precautions matter, but they are not sufficient. An IA function without an explicit AI use policy is relying on auditor judgment to fill a governance gap, and judgment is not a control.

Build an AI Acceptable Usage Policy specific to Audit. The corporate IT policy is insufficient for Audit. It does not address engagement confidentiality, professional standards, working paper integrity, or the specific risk profile of using AI on investigation-related content. The IA function needs its own policy, reviewed by the CAE and ideally aligned to IIA guidance and requirements.

Maintain an approved AI tools list, just as you maintain an inventory of approved software. Any AI tool touching audit data is a third-party data processor, so make sure its DPA status is documented and its usage scope is well defined.

Integrate AI use into your QAIP. The Quality Assurance and Improvement Programme should include AI tool usage as a dimension, not to prohibit it, but to ensure methodology documentation reflects how AI-assisted work is validated. If a finding was drafted with AI assistance, the review standard should address what the reviewer is verifying and how.

Be especially conservative with investigations. Forensic and investigation engagements involve some of the most sensitive data an auditor handles, such as suspected fraud, personnel matters and details of potential litigation. The bar for using AI tools in these contexts should be materially higher than for routine assurance work. In most cases, this means enterprise-only, zero-retention tools, or no AI in the loop at all.

Train for specific decision points. Generic “be careful with AI” training doesn’t change behaviour. Auditors need a concrete decision tree: Is this task on the approved list? Am I using an approved tool? Has the data been appropriately anonymised? Is the output thoroughly reviewed? Is it going into the engagement system? These checkpoints are a must!
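The five checkpoints can be written down as an explicit checklist rather than left to memory. The sketch below is one hypothetical way to do it. The `AIUseRequest` class, its field names and the messages are invented for illustration and are not drawn from any standard or tool.

```python
from dataclasses import dataclass


@dataclass
class AIUseRequest:
    """One proposed use of an AI tool on an engagement (hypothetical structure)."""
    task_on_approved_list: bool
    tool_approved: bool
    data_anonymised: bool
    output_reviewed: bool
    filed_in_engagement_system: bool


def failed_checkpoints(request: AIUseRequest) -> list[str]:
    """Return a message for every checkpoint the request does not satisfy."""
    checks = [
        ("Task is not on the approved list", request.task_on_approved_list),
        ("Tool is not approved", request.tool_approved),
        ("Data has not been appropriately anonymised", request.data_anonymised),
        ("Output has not been thoroughly reviewed", request.output_reviewed),
        ("Output is not filed in the engagement system", request.filed_in_engagement_system),
    ]
    return [message for message, passed in checks if not passed]


if __name__ == "__main__":
    request = AIUseRequest(True, True, False, True, True)
    problems = failed_checkpoints(request)
    print("Proceed" if not problems else "Stop: " + "; ".join(problems))
```

The value is less in the code than in forcing each question to have a yes or no answer before the prompt is sent and before the output is filed.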

The Broader Point

Internal audit’s authority rests on trust. The stakeholders who share sensitive information with us (management, the board, regulators) do so because they believe we handle it with discretion and professional care. That trust cannot be delegated to a third-party model, no matter how capable it promises to be.

AI tools are genuinely useful for the audit profession. They reduce friction in methodology work, accelerate drafting, and can surface patterns in data that no manual process would find efficiently. The question is not whether to use them. That ship has sailed! The question is whether you use them in a way that would survive scrutiny from the audit committee, regulators, and the clients whose data you hold.

The precautions in this article are not obstacles to using AI. They are the conditions under which using AI is professionally defensible. And for an internal auditor, professional defensibility is not optional.

The same rigour we apply to our work should apply to the AI tools we use to produce it. The standard doesn’t change because the tool is convenient.